When we think of astronomy and looking at the night sky, the most common images we hold are either those of telescopes in our back gardens or perhaps the giant professional observatories on mountain summits.

However, our universe doesn't only shine in optical light. In fact, we can observe it in many other kinds of light, from energetic X-rays all the way down to the less energetic light at radio wavelengths.

The advantage of radio wavelengths is that radio waves pass through the Earth's atmosphere, so we do not have to fly telescopes into space to do the astronomy.

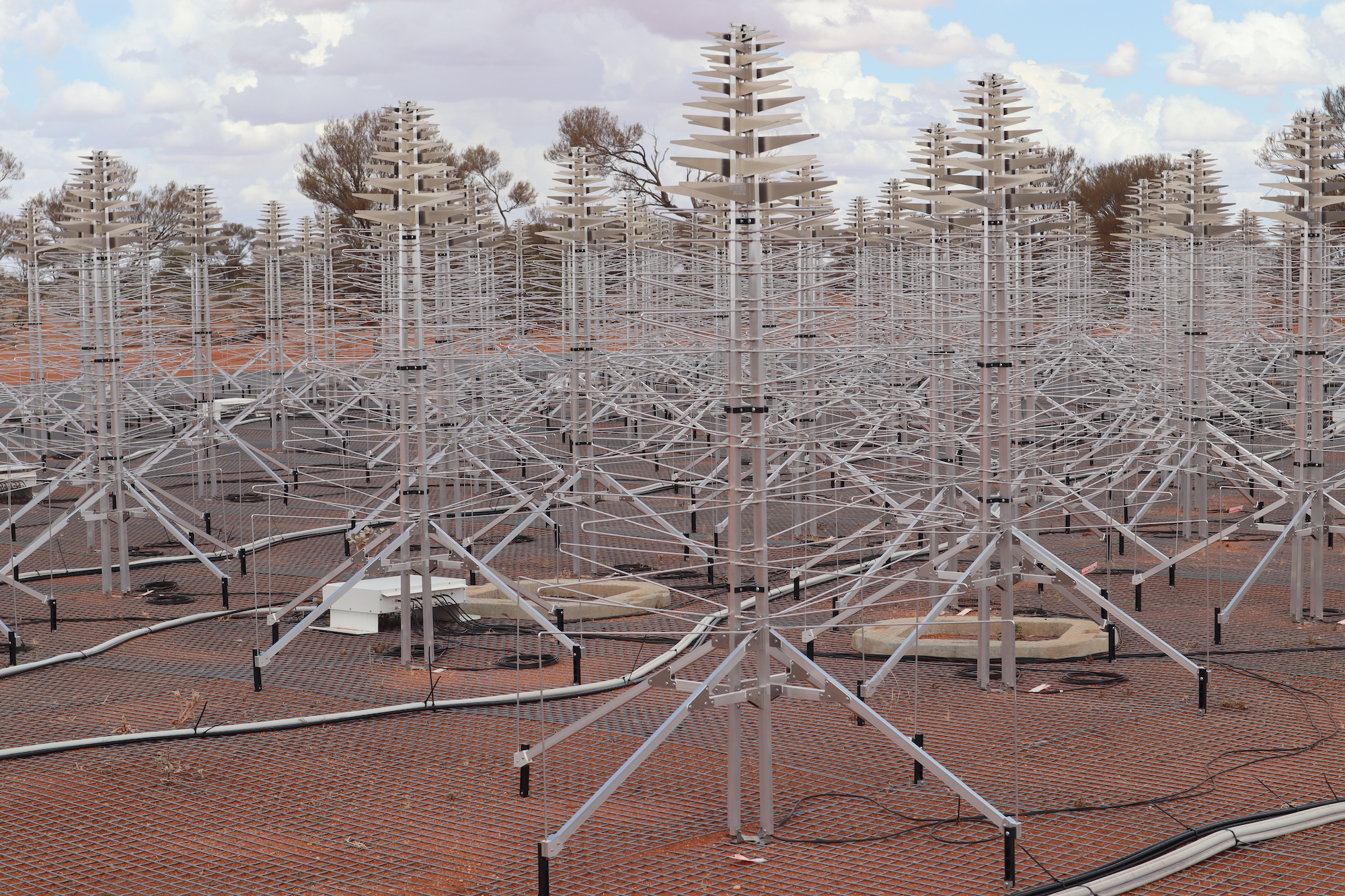

Radio telescopes are perhaps not what we may expect. Instead of a familiar telescope shape, they can be either giant dishes or wiry antennas (looking like old TV aerials).

Radio telescopes today are usually built in large arrays of these dishes or antennas, covering large areas of land. The reason behind this is that in radio astronomy we can play a scientific trick. If we spread, say, ten 1m radio dishes across an area 10m wide and combine their signals, we can see detail as fine as a single 10m dish would. In theory we could build a gigantic radio telescope made up of hundreds of smaller ones.
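One way to see why grouping dishes helps is through resolving power: the finest detail a telescope can pick out is roughly the observing wavelength divided by the width of the telescope (or, for an array, the widest separation between its dishes). The short Python sketch below compares a single 1m dish with dishes spread over 10m. The 21cm wavelength and the function name `resolution_arcsec` are assumptions chosen purely for illustration, and the formula is only the rough rule of thumb.

```python
import math

def resolution_arcsec(wavelength_m: float, aperture_m: float) -> float:
    """Rough angular resolution in arcseconds, using theta ~ wavelength / aperture."""
    theta_rad = wavelength_m / aperture_m
    return math.degrees(theta_rad) * 3600.0

WAVELENGTH_M = 0.21  # assumed for illustration: the 21 cm hydrogen line

single_dish = resolution_arcsec(WAVELENGTH_M, 1.0)  # one 1 m dish on its own
array = resolution_arcsec(WAVELENGTH_M, 10.0)       # dishes spread across 10 m

print(f"1 m dish:   {single_dish:.0f} arcsec")
print(f"10 m array: {array:.0f} arcsec")
print(f"The array sees detail about {single_dish / array:.0f} times finer")
```

A smaller number means sharper vision, so spreading the dishes ten times wider gives about ten times finer detail. Note that this only describes sharpness: the amount of radio light collected still depends on the total surface area of all the dishes added together.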

This is what the Square Kilometre Array (or SKA) project is trying to do. The SKA project will build and engineer the largest radio telescope, in fact the largest scientific facility, in the history of humankind.

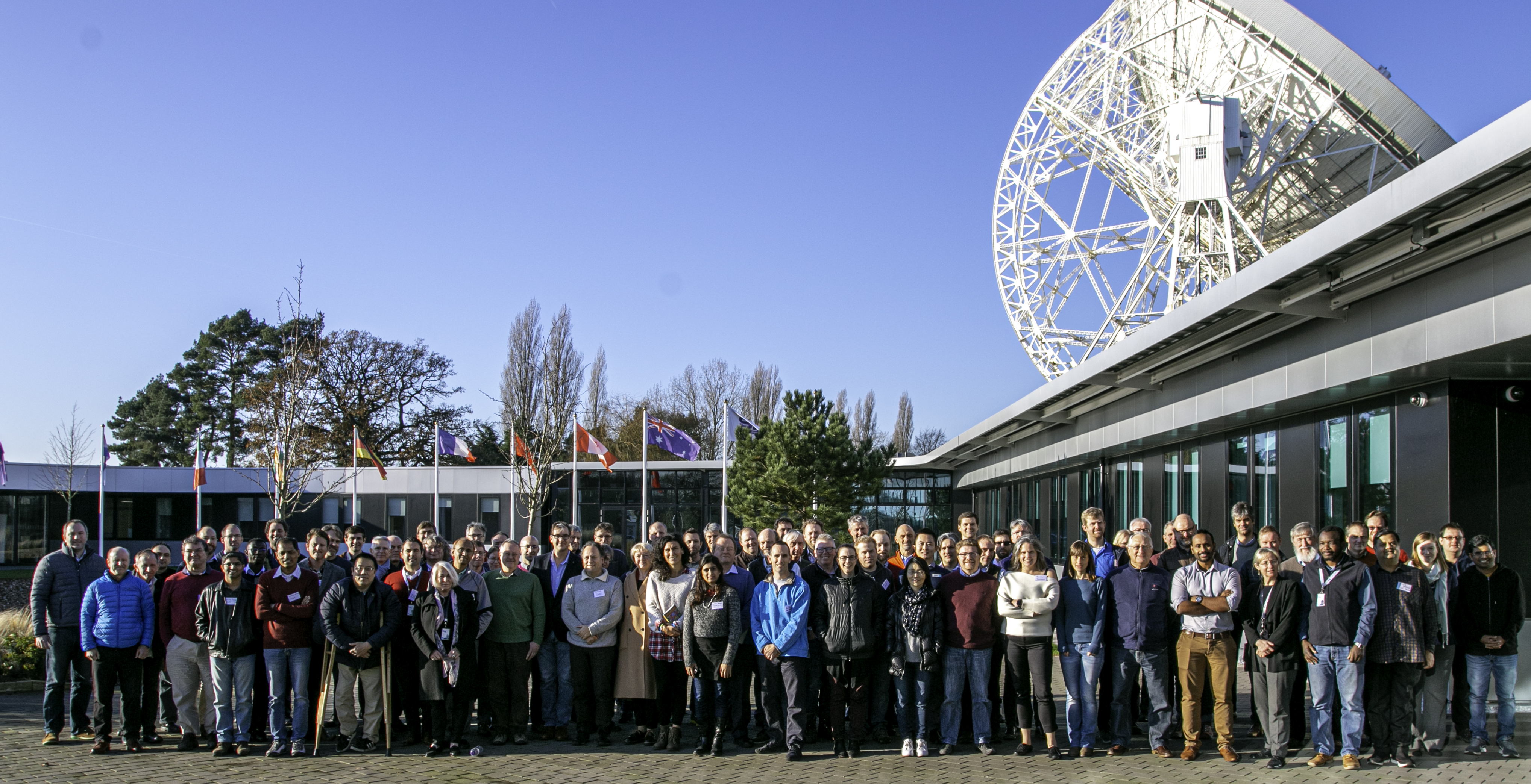

Putting together thousands of dishes and hundreds of thousands of small antennas, we hope to create the equivalent of a telescope with a collecting area of around one square kilometre (compare that with the Hubble telescope, whose mirror is about 2.4m across). The SKA project is so big that half of the elements are being built at a site in South Africa and the other half in Australia, using the remote desert areas to avoid any domestic radio interference. The headquarters will be in the UK at Jodrell Bank, near Manchester.

This sounds like an amazing engineering feat and it certainly is. However, in reality most of the technology is not particularly new. Certainly, the antennas are relatively cheap and just thrown down on the ground in massive congregations. So you may ask, 'Why wasn't the SKA built earlier, say 20 years ago?'

Well, in terms of the dish and antenna technology it could have been built relatively easily. The problem is not with the engineering but with the computing technology. Put simply, there have never been, and still are not, the computing facilities and power to deal with the vast amount of data that the SKA will produce.

The SKA poses significant challenges in computing design, large-scale distribution and computer programming. Computers will need to receive the data from every single one of the thousands of individual dishes and antennas, combine (or 'correlate') all the signals together and then process the result to produce astronomical radio images of the sky.
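To give a feel for what correlating signals means, here is a toy Python sketch, not the SKA's actual software. It invents four antennas that each pick up the same faint sky signal buried in their own receiver noise, then multiplies and averages the data from every pair of antennas. All the names and numbers (`N_ANTENNAS`, `correlate`, the signal strength) are made up for illustration, and a real correlator does much more, such as correcting for the different arrival times of the signal at each antenna.

```python
import random
from itertools import combinations

def correlate(a, b):
    """Average product of two equal-length sample streams (no time delay applied)."""
    return sum(x * y for x, y in zip(a, b)) / len(a)

random.seed(42)    # fixed seed so the toy example is repeatable
N_ANTENNAS = 4     # hypothetical tiny array
N_SAMPLES = 5000   # samples recorded by each antenna

# A faint "sky" signal shared by every antenna...
sky = [random.gauss(0.0, 1.0) for _ in range(N_SAMPLES)]

# ...buried in noise that is different for each antenna.
streams = [
    [0.5 * s + random.gauss(0.0, 1.0) for s in sky]
    for _ in range(N_ANTENNAS)
]

# One correlation per pair of antennas: N * (N - 1) / 2 pairs in total.
pairs = list(combinations(range(N_ANTENNAS), 2))
correlations = {(i, j): correlate(streams[i], streams[j]) for i, j in pairs}

print(f"{N_ANTENNAS} antennas give {len(pairs)} pairs")
for pair, value in correlations.items():
    print(pair, round(value, 3))
```

Because each antenna's noise is unrelated to every other antenna's, it averages away in the products and only the shared sky signal survives. The catch is the number of pairs: it grows roughly with the square of the number of antennas, which is one reason the computing load becomes so large for an array of thousands.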

To put this challenge in perspective, we need a lot of clever data processing because the antenna arrays in the SKA could produce more than 100 times the current global internet traffic! The data collected by the SKA in a single day would take nearly two million years to play back as music on your iPhone. Therefore we will need to compress (reduce) the data, extract what we need and throw away the rest. The SKA will need to process 10 petabytes (that's 10 million gigabytes) of data every HOUR.
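The 10 petabytes per hour figure is easier to grasp when converted into other units. The few lines of Python below do that arithmetic, assuming the decimal definitions of the units (1 petabyte = 10^15 bytes); the variable names are just for illustration.

```python
GIGABYTE = 10**9    # bytes (decimal definition, assumed here)
TERABYTE = 10**12
PETABYTE = 10**15

rate_per_hour = 10 * PETABYTE  # the figure quoted above, in bytes per hour

gigabytes_per_hour = rate_per_hour // GIGABYTE
terabytes_per_second = rate_per_hour / 3600 / TERABYTE
petabytes_per_day = rate_per_hour * 24 // PETABYTE

print(f"{gigabytes_per_hour:,} gigabytes every hour")        # 10,000,000
print(f"{terabytes_per_second:.2f} terabytes every second")  # 2.78
print(f"{petabytes_per_day} petabytes every day")            # 240
```

In other words, the processing has to keep up with nearly three terabytes arriving every second, around the clock.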

So how can this miracle of computing be achieved? At RAL Space we are part of a global team that is working to create the Science Data Processor (SDP) of the SKA. The Science Data Processor will include the software that does the job of taking in all that SKA data, carrying out the processing and outputting the results.

We start small with prototypes of code. Much of our code is written in the very familiar Python programming language. By starting small we can work out whether our computing strategy is heading in the right direction before building anything too big. Flexibility and adaptability are the key factors in our coding. The old way of approaching this kind of challenge was to map out all the work needed to create the BIG result and then to get on with it, delivering the final result at the end. This is now considered a bit old-fashioned and also quite risky: what if you do a massive amount of work and then a small mistake is found right at the end which makes all that work a waste of time? So now we go forward in little 'sprints' of software development, trying things out to see if they work. This means that if we find a mistake or a better way of doing something we can easily correct it. This is often referred to as the 'agile' approach to programming.

In the end, once we have our small working version of the software, we can then 'scale up' the entire project to create the Science Data Processor for the SKA!

The SKA is expected to start operations in the 2020s and will transform our view of the radio universe, as well as pushing the boundaries of technological and scientific computing development across the world.

Images:

Middle: SKA Low antennas at the Murchison Radio-astronomy Observatory in Australia. Credit: STFC RAL Space/ Chris Pearson

Bottom: SKA meeting at Jodrell Bank which includes the RAL Space members working on SDP - Chris Pearson, Nijin Thykkathu, Brian McIlwrath, Andrew McDermott and Steve Guest. Credit: SKA.